The free skill library is not a gift (it's a strategy)

Why Anthropic and Vercel are both building open skill ecosystems - and what every product builder can learn from it.

I have spent a decade inside the rooms where retention gets engineered. Most of what happens in those rooms is tactical - cancellation flows, re-engagement emails, paywall friction tuned to the nearest decimal. The interesting stuff is structural: the decisions made years before a churn problem surfaces that determined whether users could even leave. The skill marketplace is one of those decisions, and it’s happening right now, in public. Most people watching are reading it as a developer story; the real story is about retention.

Two companies are using free, open infrastructure to create the kind of lock-in that doesn’t feel like lock-in, because users aren’t trapped. They’re invested.

The most durable retention mechanism is not a feature. It’s a library of work the user has already done inside your ecosystem - where the files are portable but the institutional investment that produced them is not.

Two approaches to the same strategic move

Platform companies have always built retention the same way: the more a user deposits into a system - workflows, templates, accumulated output - the higher the switching cost becomes, and the higher it becomes without the user ever feeling pressured.

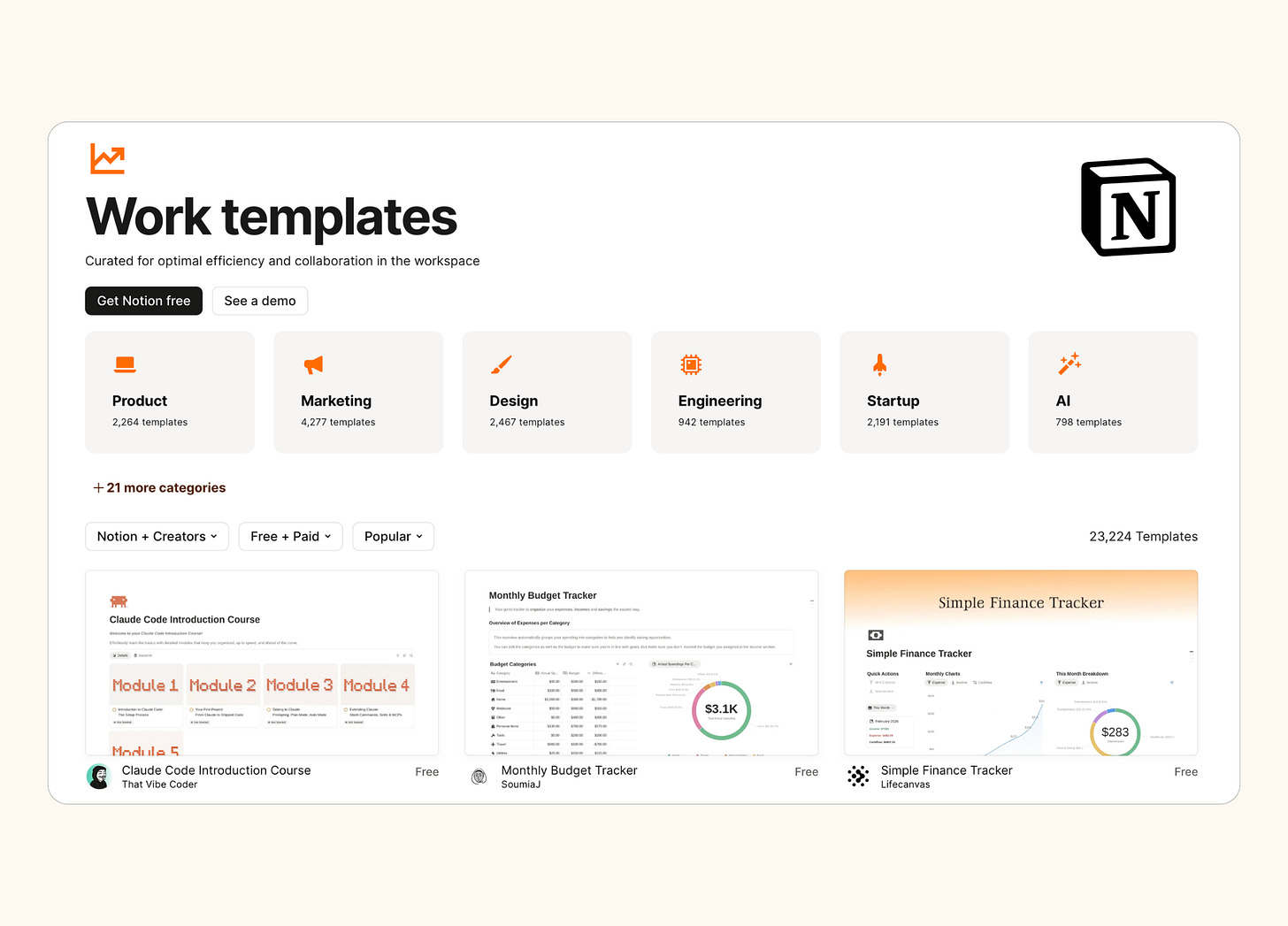

The Accumulation Approach is what Notion ran with their template gallery - templates solved the blank-canvas problem for new users while creating a secondary behavior: users who found a template they loved started building on top of it, customizing it, making it theirs. The template was the acquisition hook; the customization was the retention mechanism.

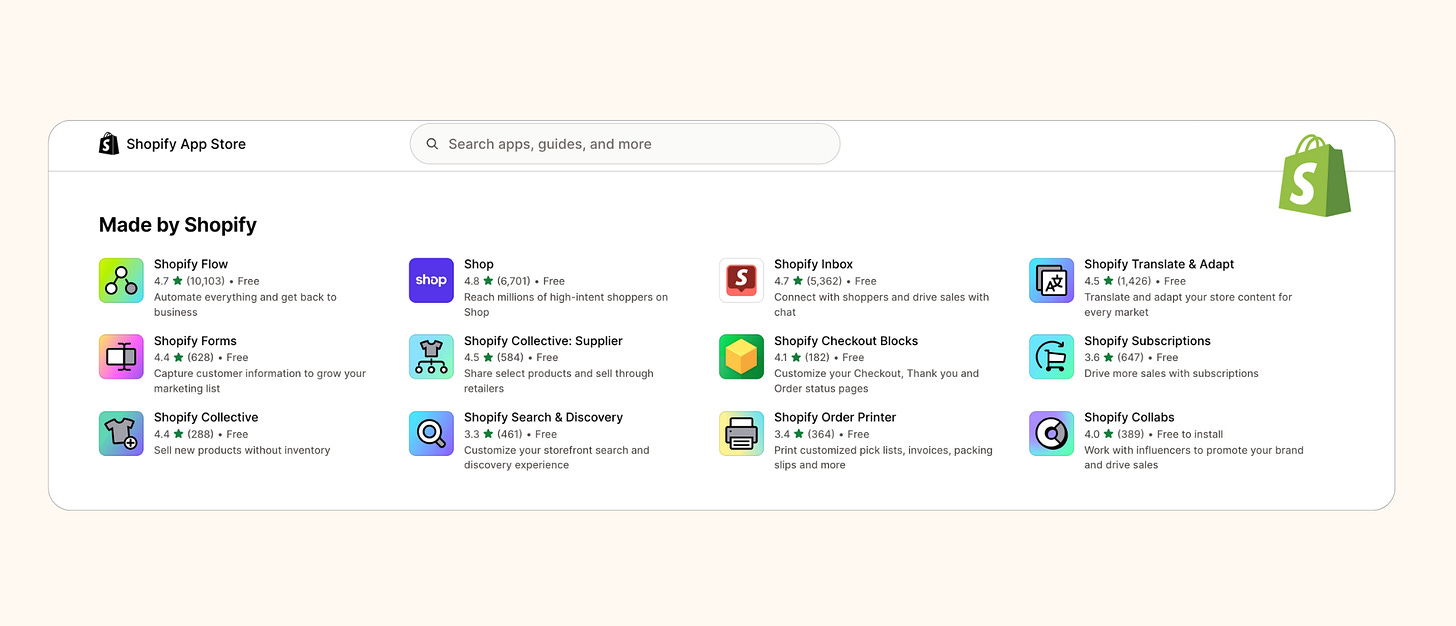

Figma ran the same play with community files. Shopify ran it with their app store, which grew to 8,000+ apps and became the most-cited reason mid-market merchants chose Shopify over alternatives even when the platform was more expensive.

The underlying mechanic is always the same: make it easy to start, make it rewarding to build, make the output of building portable only in theory.

The Standard Approach looks more generous - you publish not just a library but an open specification, and explicitly invite competitors to adopt it. Anthropic did this with MCP, donated it to the Linux Foundation, watched it become the de facto standard for how AI agents access tools, then ran the same play in December 2025 with Agent Skills. When the standard wins, the company that authored it earns ecosystem gravity regardless of which tool any individual user deploys to. The accumulation happens at the format level, not the platform level.

That wasn’t always the case with AI tooling. The current form of skills is the third attempt to solve the same problem, and each failed attempt makes the strategic logic of this one clearer.

Hamilton Helmer’s 7 Powers argues that durable advantage requires a benefit and a barrier simultaneously - strip either one out and the advantage erodes.

OpenAI’s Custom GPTs were the first attempt. Three million were built within two months of the November 2023 launch; the benefit was real.

The barrier wasn’t: a Custom GPT has no persistent memory, lives only inside the ChatGPT interface, and resets to zero with every conversation.

A switching cost with no accumulation mechanic is just a habit, and habits break.

Anthropic’s October 2025 Agent Skills fixed the container - skills were persistent, versioned, composable - but kept them Claude-only, which meant a barrier bounded by one vendor’s market share, fragile the moment a viable alternative arrives.

About Do Not Churn

Do Not Churn is a newsletter about why products retain or lose users - written by Daria Littlefield, who spent a decade leading customer operations across a $400M+ ARR portfolio of 35+ apps. If you work on retention, product strategy, or the structural design decisions that determine whether users stay, the newsletter goes out weekly so you can stay ahead of the curve on the best anti-churn practices and apply them. Subscribe so you don’t miss the next issue.

The December 2025 open standard changed which power was being built: portability across 32 agent environments activates Network Economies - value that scales with the whole ecosystem, not one platform’s subscriber count - while the proprietary governance layer on top captures Switching Costs for organizations that build their security processes around it. The open standard is what makes the proprietary layer worth owning.

On the surface, this looks like developer tooling - open source, community-driven, a rising tide that lifts all agents. In reality, it reflects something more structural:

The skill library is the new template gallery. The platform that owns the library owns the switching cost.

Anthropic

the standard as platform lock-in

Skill library: github.com/anthropics/skills - platform.claude.com/skills

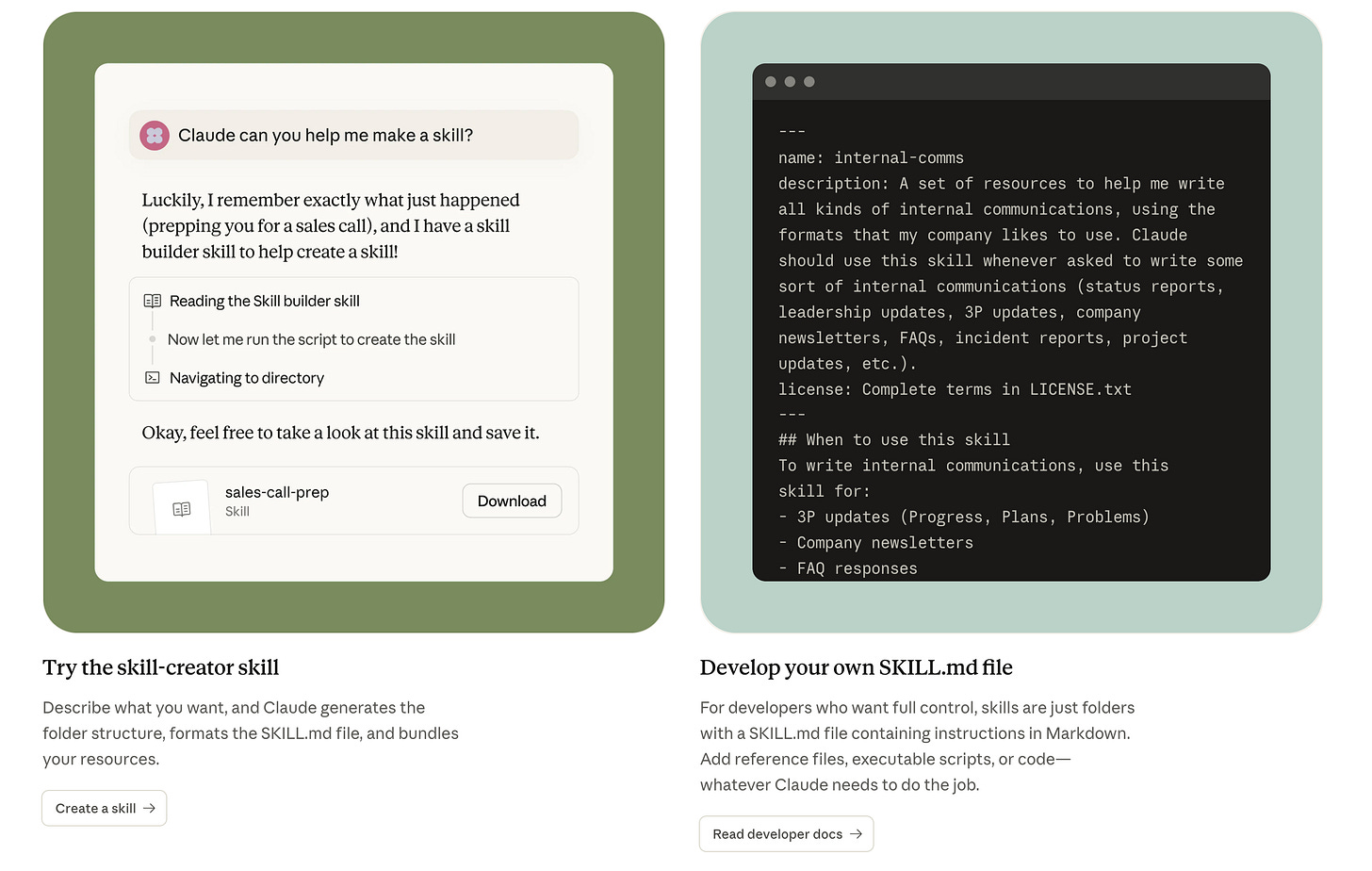

Anthropic’s skill play is optimized for what I’d call enterprise workflow capture - and the design choices make the commercial logic plain.

“I want Claude to know our company’s processes, brand guidelines, and internal workflows without me having to re-explain them every session. And I don’t want to rebuild that context every time we onboard a new tool or upgrade the model.”

It translates to:

Build internal skill library

Deploy to team via admin settings

Agent invokes skills contextually across Claude.ai, Claude Code, and API

Organizational knowledge compounds inside the platform

Best use cases:

Enterprise teams with repeatable, high-judgment workflows

PMs and operators who need consistent agent behavior across a large org

Partners building skills that integrate their product into Claude’s ecosystem

Individual contributors building personal skill libraries across products

The enterprise management layer - launched alongside the open standard - is where Anthropic’s retention mechanics actually live. Team and Enterprise administrators can now manage which skills are provisioned org-wide and which are enabled by default. That admin layer is not in the open standard. It is a paid plan feature. When an organization’s skill library is administered through Anthropic’s enterprise tooling, migrating to a different AI platform means not just porting the SKILL.md files but rebuilding the entire governance layer, the distribution logic, and the institutional knowledge about what each skill does and when it should fire.

The partner skills program completes the picture. Atlassian, Canva, Cloudflare, Figma, Notion, Ramp, and Sentry all shipped skills within months of the spec going live. Each of these integrations deepens the graph of dependencies between Claude and the tools organizations already use. Every Atlassian skill installed is a joint retention mechanism for both Anthropic and Atlassian: switching away from Claude now also means losing the deeply integrated Jira-to-Claude workflow the team has built its work around.

Churn risk emerges at the point where the portability promise is tested and found partially true: skills transfer in format but not in governance, not in partner integrations, and not in the accumulated behavior tuning built up across hundreds of real invocations. Over time, teams may find that the files move but the institutional investment doesn’t.

Vercel

the distribution layer as an acquisition funnel

Skill library: vercel.com/docs/agent-resources/skills - skills.sh

Vercel’s skill ecosystem is optimized for what I’d call developer workflow gravity - the accumulation of deployment habits that make Vercel the natural endpoint for anything built with AI.

“I want my AI agent to deploy my projects without me switching contexts to a terminal or a dashboard. And… I don’t want to manage deployment infrastructure myself every time I spin something up.”

It translates to:

Install Vercel skills to agent environment

Agent handles deployments conversationally

Each deployment creates a claimable Vercel project

Developer’s deployment history and configuration accumulate on Vercel

Best use cases:

Frontend developers using Claude Code or Cursor as their primary environment

Individuals and small teams who want deployment handled inside the agent workflow

Developers evaluating infrastructure who want the lowest possible friction to first deploy

Teams building AI-powered applications who want Vercel’s AI SDK as part of the skill stack

The Vercel deploy skill is the most commercially transparent artifact in this entire ecosystem. It creates a preview URL and a “claim” URL in the same output - every time a developer uses the skill, they have a live Vercel deployment to claim. The npm analogy that circulates on X is exactly right: npm is free; npm is also how you end up deploying to Vercel.

Churn risk for Vercel is speed-dependent: every deployment a developer makes through the skill before a native alternative exists is one more project, one more configuration that creates switching inertia. The counter-lever is habit formation, and habits form fast.

Vercel, founded by Guillermo Rauch, is a $3.25B infrastructure company built on one consistent thesis: developer experience is the acquisition strategy, deployment scale is the business. Skills.sh is that thesis applied to the agentic layer.

Six retention mechanics the skill ecosystem reveals

1. Accumulated workflow investment as a structural switching cost.

A team that has refined its sprint-planning skill across six months of real invocations has an asset that is technically portable but practically irreplaceable. The refinement is encoded in the file, but the judgment that produced it is not documented anywhere else: porting the files is easy, rebuilding the institutional knowledge is not. Users simply don’t want to leave.

2. The open standard as ecosystem gravity, not altruism.

When the standard wins, the author earns Network Economies proportional to total ecosystem scale, not just their own platform’s share; Anthropic’s decision to open-source SKILL.md and donate MCP to the Linux Foundation is the move that makes the proprietary governance layer worth building on top of.

3. Partner skill integrations as joint retention mechanisms.

Every Atlassian, Figma, or Notion skill installed through Claude creates a bilateral dependency - the user is now retained by both platforms simultaneously, and switching away from Claude requires renegotiating the integration value of every partner skill the team has adopted, not just the Claude workflow itself.

4. The leaderboard as quality signal ownership.

Vercel’s skills.sh generates anonymous telemetry from every skill install and surfaces it as a ranked directory, owning the reference layer developers consult before adopting a skill - the same position npm holds in the JavaScript ecosystem, where download counts became the default proxy for package reliability regardless of whether that proxy is actually valid.

5. Free infrastructure as top-of-funnel for paid deployment.

The Vercel deploy skill produces a claimable deployment as its primary output, converting a productivity behavior (using Claude to ship code) into a commercial behavior (acquiring a Vercel account and accumulating project history) without the developer experiencing the transition as a sales interaction.

6. Governance infrastructure as the non-portable moat.

The admin layer Anthropic built for Team and Enterprise plans - org-wide skill provisioning, default skill settings, centralized management - is the part of the skill ecosystem that the open standard explicitly excludes, and it is precisely the part that creates the deepest switching cost once an organization’s IT and security processes have been built around it.

Synthesis

A library that only runs on one platform is a cage. A library that is technically portable while practically invested is a home. Anthropic is betting that knowledge governed through enterprise tooling and integrated with commercial partners creates switching costs deep enough that Claude becomes the default by assumption, not by force. Vercel is betting that the developer who deploys once and accumulates project history on Vercel is the developer who stays - not because they’re locked in, but because starting over costs more than staying.

Churn emerges when the library stops growing, or when the governance layer fails its implicit promise that skills are safe to depend on.

Retention follows investment depth.
